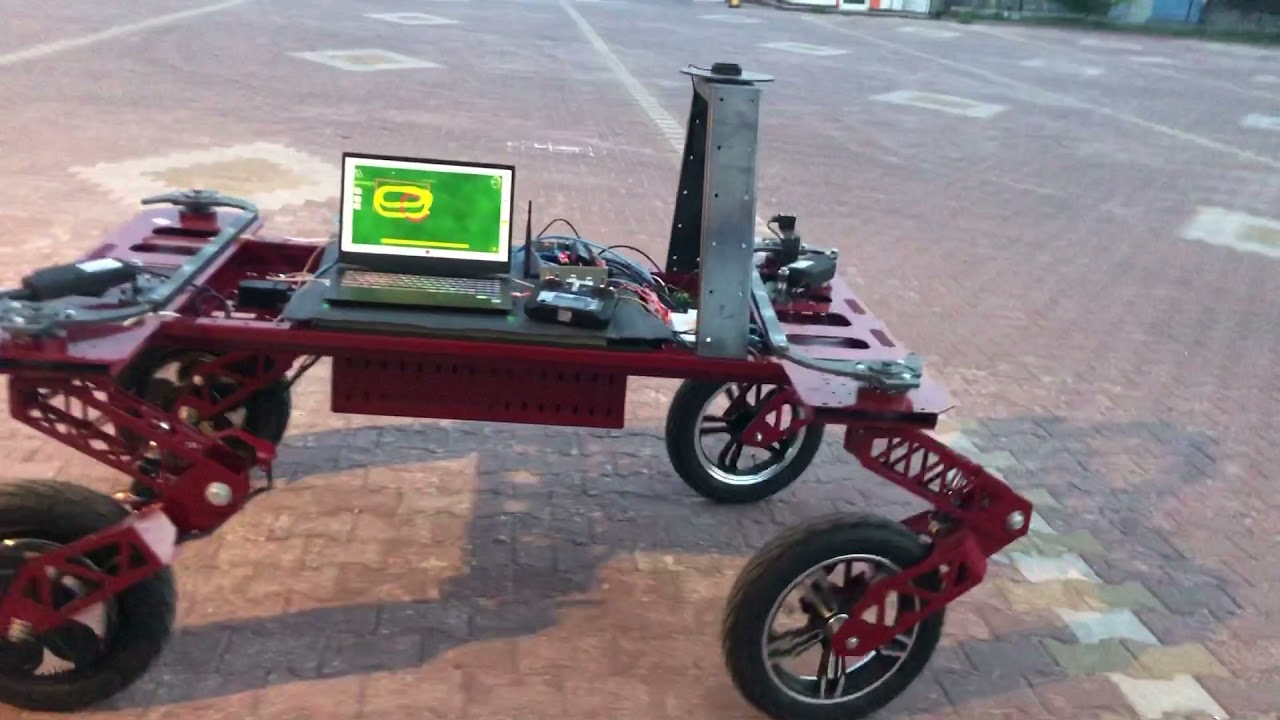

I used two simpleRTK2B XLR boards and set up my own base station. For front and rear steering I used Linak actuators (750 N), with an RQH100030 as the wheel angle sensor (WAS). The wheelbase is 150 cm and the track width is 100 cm. Drive comes from two 3 kW hub motors (6 kW total), powered by six 12 V 30 Ah batteries (72 V, 30 Ah). I use a BNO085 IMU, and UDP for communication across the whole system. My aim is to put a 600-liter tank on the vehicle and build a 10-meter spray boom from aluminum sigma profile that opens and closes with a linear actuator.

Fine adjustments are in progress now. In the future I plan to add obstacle detection with ultrasonic sensors, Lidar and a depth camera, and rate control using either a flow meter or PWM spray nozzles (5 to 50 Hz).

This tool has been my dream for years, and thanks to you I succeeded. I'm so glad you are all here. Best regards.
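For anyone sizing a similar build, here is a quick back-of-the-envelope check of the power figures above. It assumes the six 12 V batteries are wired in series (as the 72 V total implies), that "30 A" refers to a 30 Ah capacity, and it ignores motor, controller and wiring losses, so treat it as a rough estimate only:

$$
\begin{aligned}
V_{\text{pack}} &= 6 \times 12\ \text{V} = 72\ \text{V} \\
E_{\text{pack}} &= 72\ \text{V} \times 30\ \text{Ah} = 2160\ \text{Wh} \approx 2.2\ \text{kWh} \\
P_{\text{motors}} &= 2 \times 3\ \text{kW} = 6\ \text{kW} \\
I_{\text{peak}} &= \frac{6000\ \text{W}}{72\ \text{V}} \approx 83\ \text{A}
\end{aligned}
$$

In series the voltages add while the capacity stays at that of a single battery, which is why the pack is 72 V but still 30 Ah.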

Thank you so much to everyone who helped me, including anyone whose name I forgot to write. I love this group very much: everyone here is respectful and very knowledgeable. Thanks to @Brian Tischler, Muammer EKREM, @Ray, @Jorgensen, @Weder, @Math, @Matthias Hammer, @Gianluca, Andreas Ortner, @Benjamin, @Potato Farmer, @Baraki, M_Elias, Vili, Kent Stuff, Damien, BlueRabbit, Torriem, GoRoNb, Bennet, Pat, Larsvest, CommonRail, KansasFarmer, Kaupoi, Alan Webb, Jcmach.